It’s always entertaining and informative to watch Microsoft Research turn science fiction into science fact.

No doubt most people have seen the famous KinectFusion video demo by now, in which objects are surface-mapped in real time using a handheld Kinect sensor. Now the rocket science behind exactly what voodoo magic (algorithms) the software performs has been published.

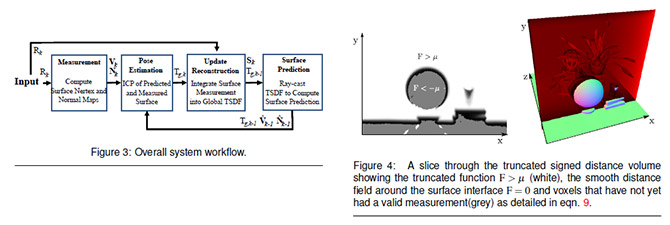

The research paper “KinectFusion: Real-Time Dense Surface Mapping and Tracking”, co-authored by no fewer than 10 researchers across Microsoft Research and three universities, goes into the detail of their “system for accurate real-time mapping of complex and arbitrary indoor scenes in variable lighting conditions, using only a moving low-cost depth camera and commodity graphics hardware.”

Since there are mathematical equations in this paper that look like they came straight out of a Hollywood sci-fi movie, I can’t claim to understand half the algorithms mentioned.

From what I can comprehend, the unique advantage of their system appears to be the ability to grow the model and its details with time and movement, in contrast to a single-frame capture. Of course, to achieve this they had to overcome a number of difficulties, including but not limited to motion drift, tracking and camera pose estimation.
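To make the "grows with time" idea a little more concrete, here is a minimal sketch of how repeated noisy depth readings of the same surface point could be folded into one steadily improving estimate with a running weighted average. This is an illustration only and is not taken from the paper's equations: the function names `fuse` and `fuse_all`, the `max_weight` cap and the sample values are all assumptions made up for this example.

```python
def fuse(model, weight, measurement, max_weight=100.0):
    """Fold one new depth reading into a running weighted average.

    model       -- current fused depth estimate for one surface point
    weight      -- how many readings the estimate effectively represents
    measurement -- the new (noisy) depth reading
    max_weight  -- hypothetical cap so old data never fully dominates
    """
    fused = (model * weight + measurement) / (weight + 1.0)
    return fused, min(weight + 1.0, max_weight)


def fuse_all(measurements):
    """Fuse a sequence of readings, starting from an empty model."""
    model, weight = 0.0, 0.0
    for m in measurements:
        model, weight = fuse(model, weight, m)
    return model, weight


# Four noisy readings of a surface that is really 2.0 m away.
readings = [2.1, 1.9, 2.05, 1.95]
estimate, confidence = fuse_all(readings)
print(estimate, confidence)
```

A single frame would leave you with whichever noisy reading you happened to get, while the fused estimate settles towards the true depth as more frames arrive. In this sketch the camera pose is assumed to be known already, which is exactly the tracking problem the authors had to solve.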

It’s also noted that the system is currently optimized for medium-sized rooms. Expanding it to much larger spaces, like the interior of a whole building, will present a number of challenges in both memory and movement tracking, though not without possible solutions.

Nevertheless, as the authors themselves note, this technology presents interesting opportunities, specifically in the field of augmented reality, where their real-time, detailed and robust system surpasses any previous solution of equal cost and availability. The recently revealed HoloDesk concept is one such implementation.

I Can’t Understand a Thing

Maybe explain it in layman’s terms, Long?

I love reading anything about science when it has the term “mad science” in it, especially when it’s to do with Microsoft! 😀