In early 2012 it seemed like everyone jumped on the Kickstarter bandwagon. At TechCrunch Disrupt San Francisco 2012 I was introduced to a project called Memoto, a miniature camera small enough to clip onto your clothing that automatically takes photos of your day-to-day life, a practice known as lifelogging.

At the time it seemed like a diamond in the rough (remember this was before Google Glass was announced). It sailed past its $50,000 goal, raising $550,000 from backers. Fast forward to today: after a year of production delays and a company rebrand, the Narrative Clip has finally started shipping.

The design

The Narrative Clip takes the wear in wearables quite literally. Inside a plastic case about half the size of a business card are a 5MP camera, GPS, an accelerometer, a magnetometer and 8GB of memory. It’s a good example of minimalist Scandinavian design and engineering, and it weighs less than a pack of gum.

A small pinhole opening on the front is the camera lens. A metallic clip at the back allows the device to grip onto most pieces of clothing: shirts, pockets, hats and anything with an edge.

Since there are no buttons on the device, four LEDs indicate battery life and a microUSB port (with a rubber cover) handles charging and syncing. The front surface is touch sensitive, so you can double tap to force it to take a photo and show battery life. Because there is no power button, placing the Clip face down or somewhere completely dark (like a bag) puts it into sleep mode.

Although you’re supposed to wear a wearable, the Clip can stand vertically on any of its four sides, and the user guide actually encourages people to use the camera as a time-lapse tool, for example to capture the clouds outside a window, as a neat secondary use for the device.

The camera

The Clip automatically takes a photo every 30 seconds (the ability to change the interval is coming in a future firmware update). Needless to say, the camera in a lifelogging wearable is pretty important, but unfortunately the one in the Clip leaves a lot to be desired.

The most significant issue is the field of view. At just 70 degrees, the photos capture basically half of what the human eye sees (approx 120 degrees). The camera on Google Glass, on the other hand, is fantastic, with a wide-angle lens (there is no official FOV but I estimate around 100-120 degrees).
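To put the 70 and 120 degree figures in perspective, here is a rough sketch of how wide a slice of a flat scene each angle covers at a given distance. The function name `scene_width` and the 2 metre distance are my own choices for illustration, and the 120 degree value is only the approximate figure quoted above.

```python
import math

def scene_width(distance_m: float, fov_degrees: float) -> float:
    """Width of a flat scene covered at distance_m by a horizontal field of view."""
    return 2 * distance_m * math.tan(math.radians(fov_degrees) / 2)

# Hypothetical example: a subject standing 2 m away.
clip = scene_width(2.0, 70)    # Narrative Clip
eye = scene_width(2.0, 120)    # approximate human field of view
print(f"{clip:.2f} m vs {eye:.2f} m, ratio {clip / eye:.2f}")
# prints "2.80 m vs 6.93 m, ratio 0.40"
```

Measured by angle alone the Clip covers a little over half (70/120), but measured by scene width at arm's-length distances it is closer to 40 percent, which matches how cramped the photos feel.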

One redeeming factor about the camera is its ability to automatically correct the tilt of each photo. Due to the flexible design of the Clip, in a lot of scenarios it will be attached to clothing at an angle and will therefore take photos at an angle. Of course, photos at an angle don’t make for stunning pictures.

Thanks to the sensors built into the device, each photo carries some metadata that the Narrative cloud servers process to adjust the rotation of the photo so it is more consistently levelled with the horizon.
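Narrative hasn't published how the levelling works, but the general idea can be sketched with the accelerometer alone: gravity tells you which way is down, and the angle between "down" and the bottom of the frame is the tilt. The snippet below is a minimal sketch under my own assumptions (x points right and y points down in the image, so a level camera reads gravity as (0, 1)); the function names are hypothetical and this is not Narrative's actual algorithm.

```python
import math

def roll_degrees(ax: float, ay: float) -> float:
    """Camera tilt about the lens axis, from the gravity reading in the image plane.

    Assumes x points right and y points down in the image, so a level
    camera reads (ax, ay) = (0, 1) and returns 0 degrees.
    """
    return math.degrees(math.atan2(ax, ay))

def levelling_rotation(ax: float, ay: float) -> float:
    """Rotation to apply to the photo to undo the tilt (sign depends on convention)."""
    return -roll_degrees(ax, ay)

# Hypothetical reading from a Clip hanging at an angle on a shirt pocket.
print(roll_degrees(0.5, 0.866))        # roughly 30 degrees of tilt
print(levelling_rotation(0.5, 0.866))  # roughly -30 degrees to level it
```

Whether the correction ends up clockwise or counter-clockwise depends on the sensor's axis layout and the image library used, so the sign here should be treated as illustrative.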

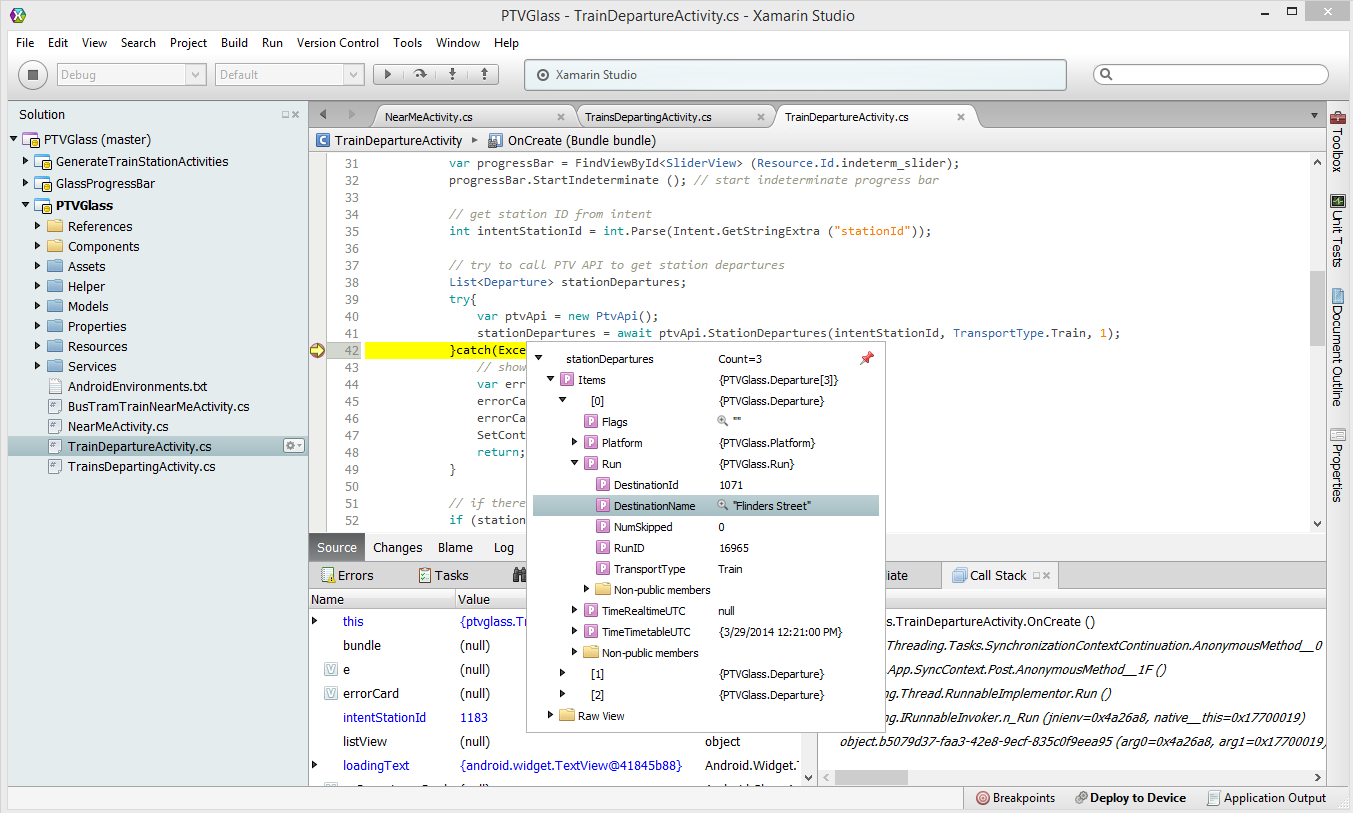

The app

The Clip is actually pretty useless without two companion apps: an uploader on a computer and a viewer on the phone.

The uploader program for Windows and OS X allows the Clip to sync its photos to the computer, the cloud or a combination of the two.

The Narrative Cloud is free for all users in the first year (and $9/month after that). Due to the amount and size of the photos you would take day-to-day, the cloud is actually the only reasonable option if you intend to keep all your captures. The Narrative Cloud is also required for the photo post-processing features like tilt-correction, date grouping and location grouping.

Users with slow or limited upload bandwidth are not going to enjoy the fact that you could be uploading gigabytes of photos every few days.
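For a sense of scale, here is a back-of-the-envelope sketch based on the 30-second capture interval. The 12 hours of wear per day and the 1 MB average photo size are my own assumptions for illustration, not figures from Narrative, so plug in your own numbers.

```python
def photos_per_day(interval_s: float, hours_worn: float) -> int:
    """Number of photos taken while the Clip is worn and awake."""
    return int(hours_worn * 3600 // interval_s)

def upload_size_gb(photo_count: int, avg_photo_mb: float) -> float:
    """Total upload size in gigabytes (1 GB = 1000 MB)."""
    return photo_count * avg_photo_mb / 1000

# Assumed values: 12 hours of wear, 1 MB per 5MP photo.
count = photos_per_day(interval_s=30, hours_worn=12)
print(count)                       # 1440 photos
print(upload_size_gb(count, 1.0))  # 1.44 GB
```

Under those assumptions a single day fills more than a gigabyte, so a few days of regular wear quickly adds up.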

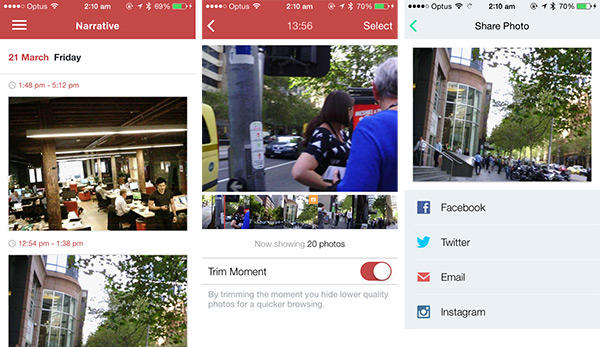

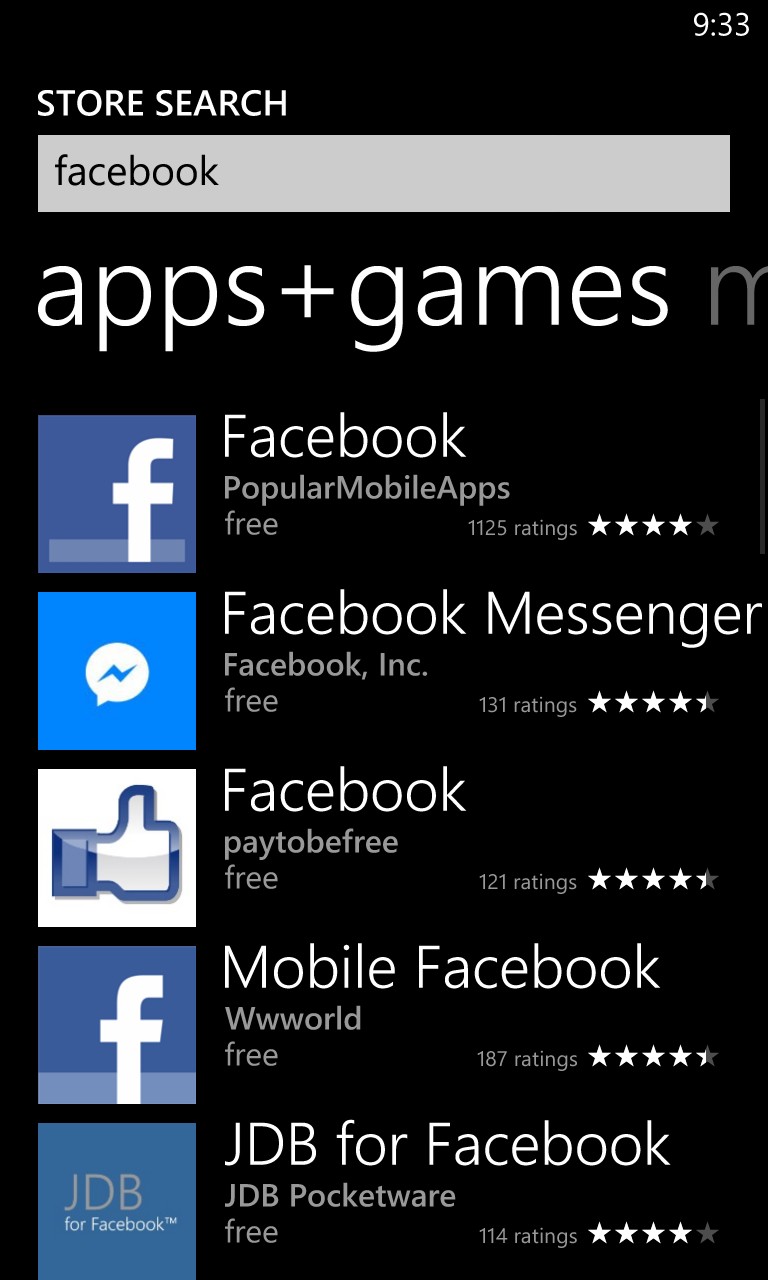

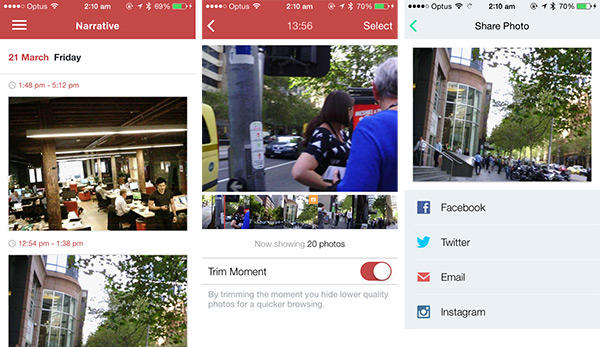

The mobile app, available only on iPhone and Android at the moment, provides the ability to view the post-processed and grouped photos stored in the cloud.

The cloud service tries its best to automatically select a number of photos in each group that it thinks are interesting and represent key “moments” of the day. Whilst this trims hundreds of photos down to just a handful, the algorithm is a bit hit and miss.

Each moment (trimmed or in full) can also be played back like a time-lapse, but unfortunately there’s no way to export a video or animated GIF of this. You can share individual photos to Facebook, Instagram, Twitter or email.

The experience

Wearing a Narrative Clip is somewhere between a watch like Pebble and a headset like Glass. It’s not as obvious as a display and camera on your head, but someone directly in front of you will definitely still notice a little black box around your neck.

I believe the arguments against wearables over their ability to discreetly take photos and video are undermined by the fact that video-recording glasses and pens are readily available on the market at much lower prices and are far more discreet. However, I do recognise that people behave differently when they are aware they are being recorded, which changes the dynamics of social gatherings.

For that reason I think the Narrative Clip is actually a worse experience than Google Glass, since the Clip is entirely passive. As long as it is worn, it is always recording at a set interval without any interaction or control, until it is put in a bag or placed face down. A device like Glass, on the other hand, is barely “on/active” and only takes photos on command.

The funny thing is that I actually felt uncomfortable wearing the Clip myself.

Summary

If you’re willing to upload gigabytes of nondescript photos day after day with the anticipation that you might have captured an interesting Kodak moment while out and about, the Narrative Clip is the gadget to check out.

Wearable technologies have come a long way since 2012 and the Narrative Clip is disappointing at $279. Its simple lifelogging functionality only scratches the surface of what I have come to expect of a device to be worn day in, day out.