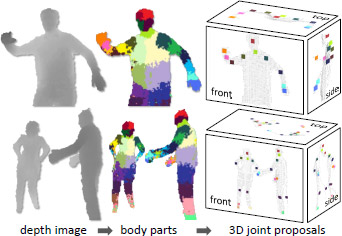

As with a good artistic visualization, you never get bored of looking at how the Kinect works behind the scenes. Of course, pretty colors and dancing skeletons are captivating, but the hard science behind how the Kinect on Xbox 360 recognizes human poses in real time from a single depth image is equally fascinating.

To quench the Kinect thirst, Microsoft Research recently released an 8-page research paper, “Real-Time Human Pose Recognition in Parts from a Single Depth Image”, to be presented at the IEEE Conference on Computer Vision and Pattern Recognition (CVPR) in June. The paper reveals many interesting facts, methods and data behind Kinect's algorithms.

Whilst acknowledging previous work in the field, one area the research team focused on improving was per-frame initialization: the system estimates the user's pose from each frame on its own, so it works without a lengthy “set-up” phase for the user.

The success of their work is, of course, a key component of Kinect: anyone can hop in and play at any time.

As part of its development, the team collected a database of around 500,000 frames of motion capture data of simulated poses, recorded with different people in entertainment scenarios such as driving, dancing, kicking, running and navigating menus.

From that, they reduced the dataset to about 100,000 more distinct poses, which the system was then trained on to estimate body parts. As an indication of just how computationally intensive the development process was, “training 3 (decision) trees to depth 20 from 1 million images takes about a day on a 1000 core cluster”.
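The article doesn't say exactly how the 500,000 frames were thinned out. One simple way to drop near-duplicate poses is a greedy pass that keeps a pose only if it lies at least some minimum distance from every pose already kept. The sketch below assumes that approach; the pose representation (a list of 3D joint positions in metres), the `pose_distance` metric (largest per-joint distance) and the threshold in the usage example are illustrative assumptions, not the team's published settings.

```python
import math
from typing import List, Tuple

Joint = Tuple[float, float, float]  # (x, y, z) joint position in metres
Pose = List[Joint]                  # one entry per skeleton joint


def pose_distance(a: Pose, b: Pose) -> float:
    """Largest per-joint Euclidean distance between two poses (assumed metric)."""
    return max(math.dist(ja, jb) for ja, jb in zip(a, b))


def prune_poses(poses: List[Pose], min_dist: float) -> List[Pose]:
    """Greedily keep a pose only if it is at least min_dist from every kept pose."""
    kept: List[Pose] = []
    for pose in poses:
        if all(pose_distance(pose, k) >= min_dist for k in kept):
            kept.append(pose)
    return kept


# Toy two-joint poses; the 0.05 m threshold is hypothetical.
frames = [
    [(0.0, 0.0, 2.0), (0.0, 0.5, 2.0)],
    [(0.01, 0.0, 2.0), (0.0, 0.5, 2.0)],  # 1 cm from the first pose: dropped
    [(0.3, 0.0, 2.0), (0.0, 0.5, 2.0)],   # 30 cm from the first pose: kept
]
print(len(prune_poses(frames, min_dist=0.05)))  # 2
```

This greedy pass compares each candidate against every kept pose, so its cost grows quadratically in the worst case; at hundreds of thousands of frames, a real pipeline would likely need clustering or a spatial index to stay tractable.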

For one, I’m glad they spent days on 1,000-core clusters so that Kinect can recognize me kicking and jumping at 200 frames per second.
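The cluster time goes into training; at run time each depth pixel only walks down a few trees. The CVPR paper describes per-pixel features that compare the depth at two offsets around the pixel, with the offsets scaled by the pixel's own depth, and averages the body-part distributions stored at the leaves each tree reaches. The sketch below is a minimal, hand-built illustration of that evaluation step only, not the shipping Kinect code; the `Node` layout, the `BACKGROUND` value returned for probes that fall off the image, and the toy trees are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple

Depth = List[List[float]]  # depth image in metres, indexed [row][col]
BACKGROUND = 1e6           # hypothetical large depth for off-image probes


def depth_at(img: Depth, row: float, col: float) -> float:
    """Depth at the nearest pixel, or BACKGROUND if the probe leaves the image."""
    r, c = int(round(row)), int(round(col))
    if 0 <= r < len(img) and 0 <= c < len(img[0]):
        return img[r][c]
    return BACKGROUND


@dataclass
class Node:
    """Split node (offsets u, v and a threshold) or leaf (a body-part distribution)."""
    u: Tuple[float, float] = (0.0, 0.0)
    v: Tuple[float, float] = (0.0, 0.0)
    threshold: float = 0.0
    left: Optional["Node"] = None
    right: Optional["Node"] = None
    dist: Optional[Dict[str, float]] = None  # set only on leaves


def feature(img: Depth, row: int, col: int, u, v) -> float:
    """Depth difference between two probes; dividing offsets by depth makes them scale with distance."""
    d = depth_at(img, row, col)
    a = depth_at(img, row + u[0] / d, col + u[1] / d)
    b = depth_at(img, row + v[0] / d, col + v[1] / d)
    return a - b


def classify_pixel(trees: List[Node], img: Depth, row: int, col: int) -> Dict[str, float]:
    """Average the leaf distributions reached in each tree."""
    totals: Dict[str, float] = {}
    for tree in trees:
        node = tree
        while node.dist is None:  # descend until a leaf is reached
            f = feature(img, row, col, node.u, node.v)
            node = node.left if f < node.threshold else node.right
        for part, p in node.dist.items():
            totals[part] = totals.get(part, 0.0) + p / len(trees)
    return totals


# Toy example: one single-split tree and one leaf-only tree.
img = [[2.0, 2.0, 2.0],
       [2.0, 2.0, 3.0],
       [2.0, 2.0, 2.0]]
stump = Node(u=(0.0, 2.0), v=(0.0, 0.0), threshold=0.5,
             left=Node(dist={"torso": 1.0}),
             right=Node(dist={"hand": 1.0}))
leaf_only = Node(dist={"torso": 1.0})
print(classify_pixel([stump, leaf_only], img, 1, 1))  # {'hand': 0.5, 'torso': 0.5}
```

With trees of depth 20, a pixel needs at most about 20 comparisons per tree, and each pixel is classified independently of the others, which is part of why this step can run so quickly on parallel hardware.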

Good article, thanks!

MSR and one of its researchers (Fitzgibbon) have prior research on tracking motion in video that is actually used in movie production today. Looking back, it’s fascinating to watch the evolution and confluence of all these things that helped shape Kinect.

The question is when Windows will incorporate Kinect features into the end-to-end experience instead of just offering an SDK, and whether people who purchased Kinect for Xbox will have to buy a newer, incompatible Kinect for Windows.

This is a ridiculous comment. Why should it be incompatible? Is Windows 7 incompatible with Windows Vista or Windows XP? C’mon!

When will the official SDK be out for personal use?

Correction: The Kinect can’t actually recognize you kicking and jumping at 200 fps because the camera does not have that high a frame rate. It’s the software running on the Xbox that’s able to interpret the input data at that rate.
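The arithmetic behind this correction is simple. At 200 frames per second the software needs about 5 ms per frame, while a depth camera running at 30 frames per second (an assumed figure; the comment doesn't state the sensor's rate) delivers a new frame only every 33 ms or so. A quick check in Python:

```python
SOFTWARE_FPS = 200  # processing rate quoted in the article
CAMERA_FPS = 30     # assumed depth-sensor frame rate

budget_ms = 1000 / SOFTWARE_FPS   # processing time per frame at 200 fps
interval_ms = 1000 / CAMERA_FPS   # time between frames from the camera

print(f"{budget_ms:.1f} ms per frame vs {interval_ms:.1f} ms between frames")
print(f"pose estimation uses about {budget_ms / interval_ms:.0%} of each frame interval")
```

Under that assumption, the pose estimation occupies only a fraction of each camera frame interval, leaving time for the rest of the game.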

I’ll bet you $100 that in 5 years we can come back to this thread and the following will be accurate: the empirical ontology of a human limit is the most powerful concept to touch technology since LSV. Microsoft just has no idea what they actually discovered.

Some Microsoft people do. Jaron Lanier works for Microsoft now, partly due to Kinect: http://www.geekwire.com/2011/virtual-reality-visionary-jaron-lanier-microsoft-gig-kinect-beautiful-exciting

technology really works.